AI has transformed traditional A/B testing into a powerful tool for making data-driven decisions.

AI-powered A/B testing platforms analyze real-time data, identify complex patterns, and deliver instant insights that help businesses optimize their strategies with greater precision and speed.

Modern businesses need smarter ways to test and improve their digital experiences.

AI-driven testing approaches enable continuous experimentation and personalization. These methods move beyond simple split tests to create dynamic, user-focused experiences that adapt in real time.

Advanced AI technologies make A/B testing more efficient and predictive than ever before.

We can now process larger datasets, uncover hidden patterns, and make faster, more accurate decisions about what works best for our audiences.

Key Takeaways

- AI automation speeds up testing cycles and delivers more accurate results

- Real-time data analysis enables dynamic personalization and instant optimization

- Machine learning algorithms predict test outcomes before experiments conclude

Understanding AI-Driven A/B Testing

AI-powered A/B testing combines machine learning algorithms with traditional testing methods.

This approach uses real-time data analysis to detect patterns and predict outcomes with greater precision.

What Is AI-Driven A/B Testing?

AI-driven A/B testing uses artificial intelligence to analyze and optimize test variations automatically.

The AI system continuously monitors performance metrics and user behavior patterns.

Unlike manual testing, AI can process vast amounts of data at once and make quick adjustments based on emerging trends.

The system works by:

- Analyzing user interactions in real time

- Identifying winning variations faster

- Automatically allocating traffic to better-performing versions

- Making data-driven predictions about test outcomes
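
As a rough illustration of automatic traffic allocation, the sketch below uses Thompson sampling, one common multi-armed bandit technique. It is not a description of how any particular platform works. The variant names, the true conversion rates, and the `simulate` helper are all hypothetical and exist only to drive the example.

```python
import random

def thompson_pick(stats, rng):
    """Pick a variant by sampling each one's Beta posterior over its conversion rate."""
    best_name, best_draw = None, -1.0
    for name, (conversions, visitors) in stats.items():
        # Beta(1 + successes, 1 + failures) is the posterior under a uniform prior.
        draw = rng.betavariate(1 + conversions, 1 + visitors - conversions)
        if draw > best_draw:
            best_name, best_draw = name, draw
    return best_name

def simulate(true_rates, n_visitors, seed=0):
    """Route simulated visitors one at a time and record (conversions, visitors)."""
    rng = random.Random(seed)
    stats = {name: (0, 0) for name in true_rates}
    for _ in range(n_visitors):
        name = thompson_pick(stats, rng)
        conversions, visitors = stats[name]
        converted = rng.random() < true_rates[name]  # hypothetical ground truth
        stats[name] = (conversions + int(converted), visitors + 1)
    return stats

# Hypothetical variants: B converts better, so it should end up with most traffic.
stats = simulate({"A": 0.05, "B": 0.15}, n_visitors=5000)
for name, (conversions, visitors) in stats.items():
    print(name, visitors, conversions)
```

Because each visitor is routed by a random draw from the current posterior, weaker variants still receive some traffic early on, which keeps the system from locking onto a lucky start.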

Key Differences Between Traditional and AI-Powered A/B Testing

Traditional testing methods use fixed sample sizes and predefined test durations.

AI-powered testing adapts dynamically as data comes in.

Key advantages of AI testing include:

- Dynamic Traffic Allocation: Automatically shifts traffic to winning variants

- Pattern Recognition: Identifies subtle user behavior patterns

- Speed: Can reach a confident decision sooner by concentrating traffic on promising variants

- Complexity Management: Handles multiple variables simultaneously

Benefits of Integrating Artificial Intelligence

AI-powered testing platforms boost conversion rates through smarter decision-making and automated optimization.

The technology reduces human bias and testing time while improving accuracy.

Teams can test more variations simultaneously without sacrificing reliability.

Measurable benefits include:

- Up to 50% faster test completion times, depending on traffic volume and effect size

- More accurate results through advanced statistical analysis

- Reduced resource requirements for test management

- Better personalization through machine learning insights

Fundamental Principles of AI-Driven Experimentation

AI-powered experimentation combines machine learning algorithms with traditional testing methods to deliver accurate and efficient results.

The technology helps identify winning variations faster and reduces the resources needed for testing.

The Role of Machine Learning in Testing Strategies

AI models enhance testing accuracy by analyzing large datasets and identifying patterns that humans might miss.

Machine learning algorithms process multiple variables at once to determine which factors influence test outcomes.

We use ML models to monitor test performance in real time and make quick adjustments when needed.

This dynamic approach helps optimize testing parameters as data comes in.

The key benefits include:

- Faster identification of winning variations

- Reduced testing time and resource costs

- More accurate performance predictions

- Better handling of complex multivariate tests

Predictive Analytics for Test Planning

AI-driven testing tools use historical data to forecast potential test outcomes.

This helps us prioritize which tests to run first based on their likelihood of success.

Predictive models analyze past experiments to:

- Estimate sample size requirements

- Calculate test duration

- Identify optimal traffic allocation

- Flag potential risks early

These insights let us plan more effective tests and avoid wasting resources on experiments unlikely to yield meaningful results.
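
Setting the predictive modeling aside, the duration estimate itself is simple arithmetic once a sample size and a traffic figure are known. The sketch below assumes those inputs are already available. The function name and the choice to round up to whole weeks (so every weekday is covered equally) are our own assumptions, not a feature of any specific tool.

```python
from math import ceil

def estimated_test_days(sample_size_per_variant, n_variants, daily_visitors,
                        traffic_share=1.0):
    """Estimate how many days a test needs, rounded up to full weeks."""
    visitors_needed = sample_size_per_variant * n_variants
    # Only part of the site's traffic may be enrolled in the experiment.
    visitors_per_day = daily_visitors * traffic_share
    days = ceil(visitors_needed / visitors_per_day)
    # Round up to whole weeks so weekday and weekend behavior are both covered.
    return ceil(days / 7) * 7

# Hypothetical inputs: 31,000 visitors per variant, 2 variants, 5,000 visitors a day.
print(estimated_test_days(31_000, 2, 5_000))
```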

Automated Hypothesis Generation

AI systems can now generate test ideas by analyzing user behavior patterns and market trends.

This helps create data-backed hypotheses instead of relying only on intuition.

The AI examines:

- User interaction data

- Previous test results

- Market research findings

- Competitor analysis

This systematic approach ensures each test has a solid foundation in data while still allowing for creative exploration of new ideas.

Machine learning models can predict which hypotheses are most likely to succeed and help prioritize testing efforts.

Designing Effective AI-Driven A/B Tests

AI-powered A/B testing requires careful planning and execution to generate reliable insights.

A strategic approach combining clear objectives, proper experimental design, and statistical rigor delivers the most valuable results.

Defining Clear Objectives and Metrics

We need to establish specific, measurable goals before starting any AI test.

Primary metrics should directly tie to business outcomes like revenue, conversions, or user engagement.

Key metrics to track:

- Conversion rate percentage

- Average order value

- User engagement time

- Click-through rate

- Bounce rate

Secondary metrics help provide context but should not distract from the main objective.

For example, if testing a new AI-powered product recommendation system, conversion rate might be our primary metric while average session duration serves as a supporting data point.

Creating Robust Experimental Designs

AI testing strategies demand careful control of variables.

We must isolate the specific element being tested to ensure valid results.

Test design elements to consider:

- Control group setup

- Variable isolation

- Test duration planning

- Device compatibility

- User segment definition

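In a simple A/B test, each variation should test a single hypothesis.

Bundling multiple changes into one variation makes it impossible to determine which element caused the observed effects; testing combinations of elements calls for a multivariate design.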

Sample Size and Traffic Allocation

Proper sample sizing ensures reliable results.

We calculate required sample size based on:

- Expected effect size

- Desired confidence level

- Baseline conversion rate

- Statistical power needed
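
The four inputs above map directly onto the standard sample size formula for comparing two proportions. The sketch below is one textbook version of that calculation, using only the Python standard library. The function name and the choice to express effect size as a relative lift are our assumptions.

```python
from math import ceil, sqrt
from statistics import NormalDist

def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Visitors needed in each variant for a two-sided two-proportion test."""
    p1 = baseline                        # baseline conversion rate
    p2 = baseline * (1 + relative_lift)  # rate we hope the variant achieves
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # from the confidence level
    z_beta = NormalDist().inv_cdf(power)           # from the statistical power
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)

# Hypothetical scenario: 5% baseline, hoping to detect a 10% relative lift.
print(sample_size_per_variant(baseline=0.05, relative_lift=0.10))
```

Smaller expected effects drive the required sample size up quickly, because the difference between the two rates appears squared in the denominator.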

Traffic allocation between variants affects test duration and reliability.

A 50/50 split often works best for two variants, but complex AI tests may require different distributions.

Ensuring Statistical Significance

Statistical significance indicates that the observed difference is unlikely to be due to random chance alone.

We monitor these key indicators:

- P-value < 0.05

- Confidence level > 95%

- Margin of error < 3%
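
For conversion data, the p-value threshold above is often checked with a two-proportion z-test. The sketch below shows that test with the standard library only. It is one conventional method rather than the procedure any given platform uses, and the visitor and conversion counts in the usage lines are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pool both groups to estimate the rate under the "no difference" hypothesis.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical counts: 500 of 10,000 convert on A, 600 of 10,000 on B.
z, p_value = two_proportion_z_test(500, 10_000, 600, 10_000)
print(f"z = {z:.2f}, significant at 0.05: {p_value < 0.05}")
```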

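Stopping a fixed-sample test early can lead to false positives.

We must let such tests run their full planned duration even if initial results look promising, unless the platform uses a sequential or adaptive method designed for early stopping.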

Small changes in conversion rates can have big impacts.

A 0.5% improvement might seem small but could mean significant revenue increases at scale.
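
To make the scale argument concrete, here is a small worked calculation. It reads the 0.5% as an absolute gain of 0.5 percentage points in conversion rate, which is our assumption, and the traffic and order value figures are hypothetical.

```python
def added_annual_revenue(monthly_visitors, lift_points, avg_order_value):
    """Extra yearly revenue from an absolute conversion-rate gain (0.005 = 0.5 points)."""
    extra_orders_per_month = monthly_visitors * lift_points
    return extra_orders_per_month * avg_order_value * 12

# Hypothetical store: 1,000,000 visitors a month and a 40-unit average order.
# 1,000,000 * 0.005 = 5,000 extra orders a month, times 40, times 12 months.
print(round(added_annual_revenue(1_000_000, 0.005, 40)))
```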

AI-Based Personalization and Targeting

AI transforms how brands connect with users by analyzing behavior patterns and preferences.

Machine learning algorithms adapt content and recommendations in real time based on user signals.

Personalized User Experiences

AI-powered personalization helps us deliver unique experiences to each visitor.

We can customize everything from homepage layouts to checkout flows based on individual preferences and past behaviors.

The technology tracks clicks, purchases, and browsing patterns to build detailed user profiles.

This data lets us show relevant products and content to different audience segments.

We use AI testing and experimentation to optimize these personalized experiences.

The algorithms continuously learn which variations work best for specific user groups.

AI-Driven Content and Recommendations

Smart recommendation engines analyze user behavior to suggest relevant content and products.

We can deliver personalized blog posts, videos, and product recommendations that match each visitor’s interests.

Machine learning algorithms help us create dynamic content that adapts to user preferences.

The AI considers factors like:

- Past purchases and browsing history

- Time spent on different pages

- Geographic location

- Device type

- Time of day

This targeted approach leads to better engagement since users see content that matches their needs and interests.

The AI keeps learning and improving its recommendations over time.

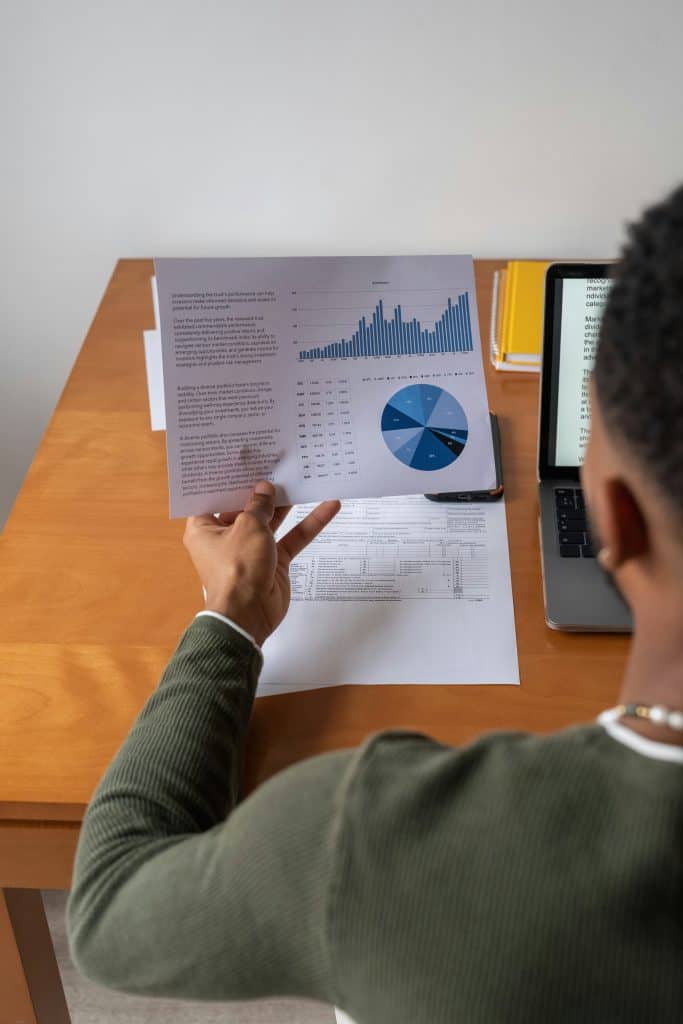

Data Collection, Analysis, and Measurement

AI-powered data analysis has changed how we gather insights from A/B tests.

Robust data collection methods and real-time analysis let us make faster, more accurate decisions.

Data Collection and Quality Assurance

We need clean, reliable data to make informed decisions.

AI-powered testing platforms help validate incoming data and flag anomalies automatically.

Quality data collection requires:

- Clear tracking parameters

- Proper event logging

- Data validation checks

- Consistent naming conventions
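
A validation check can be as simple as a function that inspects each tracking event before it enters the analysis. The sketch below is a minimal example under assumed conventions: the field names, the two allowed variant labels, and the lowercase-without-spaces naming rule are all hypothetical and would need to match your own tracking plan.

```python
from datetime import datetime

# Hypothetical tracking plan: adjust these to your own schema.
REQUIRED_FIELDS = {"user_id", "variant", "event_name", "timestamp"}
ALLOWED_VARIANTS = {"control", "treatment"}

def validate_event(event):
    """Return a list of problems found in one event (empty if it looks clean)."""
    problems = []
    missing = REQUIRED_FIELDS - set(event)
    if missing:
        problems.append(f"missing fields: {sorted(missing)}")
    if "variant" in event and event["variant"] not in ALLOWED_VARIANTS:
        problems.append(f"unknown variant: {event['variant']!r}")
    name = event.get("event_name", "")
    # Loose stand-in for a naming convention: lowercase, no spaces.
    if name and (name != name.lower() or " " in name):
        problems.append(f"inconsistent event_name: {name!r}")
    if "timestamp" in event:
        try:
            datetime.fromisoformat(event["timestamp"])
        except (TypeError, ValueError):
            problems.append(f"bad timestamp: {event['timestamp']!r}")
    return problems

print(validate_event({"user_id": "u1", "variant": "B", "event_name": "Add To Cart"}))
```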

Historical data helps establish baselines.

We recommend storing at least 6 months of user behavior data to identify seasonal patterns and trends.

Real-Time Data Analysis with AI

AI systems process real-time data to spot patterns human analysts might miss.

This leads to faster decision-making and more precise results.

AI analyzes multiple variables at once:

- User behavior patterns

- Conversion rates

- Traffic fluctuations

- Device types

- Geographic locations

Machine learning models adapt to new data inputs continuously.

We can detect winning variations up to 60% faster compared to traditional methods.

Dynamic testing adjustments happen automatically based on incoming results.

This saves resources on failed experiments.

Actionable Insights and Data-Driven Decision-Making

AI-powered A/B testing generates valuable data that shapes strategic decisions and improves user experiences.

Data-driven insights lead to better performance and higher profitability when properly analyzed and applied.

Extracting Insights from Test Results

We focus on meaningful metrics that align with business goals when analyzing A/B test results.

Key performance indicators (KPIs) should track:

- Conversion rates

- User engagement levels

- Time spent on page

- Click-through rates

- Revenue impact

AI helps process complex data patterns and identifies statistically significant differences between test variants.

This allows us to spot meaningful trends and correlations.

We connect test results to specific user behaviors and preferences.

We can segment data by user demographics, device types, and interaction patterns.
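
As a minimal sketch of that segmentation step, the function below groups raw results by a segment field and variant and returns the conversion rate for each pair. The row format and the field names (`device`, `variant`, `converted`) are hypothetical.

```python
from collections import defaultdict

def conversion_by_segment(rows, segment_key):
    """Map (segment value, variant) to its conversion rate."""
    totals = defaultdict(lambda: [0, 0])  # [conversions, visitors]
    for row in rows:
        bucket = totals[(row[segment_key], row["variant"])]
        bucket[0] += int(row["converted"])
        bucket[1] += 1
    return {key: conversions / visitors
            for key, (conversions, visitors) in totals.items()}

# Hypothetical rows, one per visitor.
rows = [
    {"device": "mobile", "variant": "control", "converted": True},
    {"device": "mobile", "variant": "control", "converted": False},
    {"device": "mobile", "variant": "treatment", "converted": True},
    {"device": "desktop", "variant": "control", "converted": False},
]
print(conversion_by_segment(rows, "device"))
```

Segment-level rates rest on far fewer visitors than the overall result, so a difference that appears in only one segment deserves more caution than the headline number.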

Making Data-Driven Decisions

Companies that use AI and real-time analytics make faster, more accurate decisions about their products and features.

We establish clear decision-making frameworks that:

- Set confidence thresholds for statistical significance

- Define success metrics beforehand

- Account for seasonal variations

- Consider long-term impacts

Quick iteration cycles help us implement winning variants rapidly.

This creates a feedback loop of continuous improvement based on actual user data.

Leveraging Predictive Insights

Decision intelligence platforms combine AI and data visualization to forecast potential outcomes of different variants.

Machine learning models can predict:

- User behavior patterns

- Conversion probability

- Feature adoption rates

- Revenue impact

These predictions help us prioritize which tests to run next.

We can focus resources on experiments with the highest potential impact.

Smart automation lets us adjust test parameters in real time based on early performance indicators.

Optimizing User Experience and Conversion Rates

AI-powered A/B testing gives us precise data about how users interact with digital products.

This testing helps create better experiences that keep users engaged and boost conversion rates through data-driven improvements.

Improving Engagement and Retention

AI-driven testing tools analyze user behavior patterns to identify what keeps people coming back.

We measure metrics like session duration, pages per visit, and feature usage to understand engagement levels.

Key engagement factors to test:

- Navigation flow and menu structure

- Content placement and readability

- Interactive elements and calls-to-action

- Mobile responsiveness and load times

We track retention by monitoring how often users return and which features they use most.

Testing different onboarding flows can increase new user activation by 20-30%.

Impact on Bounce and Click-Through Rates

Effective A/B testing strategies help us reduce bounce rates by testing page layouts, content structure, and loading speed.

Critical elements affecting click-through rates:

- Button placement and design

- Headline clarity and value proposition

- Visual hierarchy of page elements

- Trust indicators and social proof

Our tests show that optimizing these elements can reduce bounce rates by 15-25% and increase click-through rates by up to 40%.

User Feedback and Iterative Enhancement

We combine quantitative test data with qualitative user feedback to make informed improvements.

Common feedback collection methods:

- In-app surveys

- User interviews

- Heatmap analysis

- Session recordings

Testing variations based on user feedback helps us create more intuitive interfaces.

We prioritize changes that address common pain points and user suggestions.

Regular testing cycles let us measure the impact of each change and make quick adjustments when needed.

Advanced Techniques in AI-Driven Testing

AI testing platforms now offer sophisticated capabilities that go beyond basic A/B comparisons.

These modern tools use machine learning to analyze multiple variables, detect issues automatically, and measure performance with precision.

Multivariate and Personalized Testing

AI-powered testing platforms can analyze dozens of variables simultaneously, making multivariate testing more efficient than ever.

We can test different combinations of elements like buttons, images, and text all at once.

AI algorithms segment visitors based on behavior patterns and preferences.

This allows us to create personalized experiences for different user groups.

Key benefits of AI-driven multivariate testing:

- Faster testing cycles

- More accurate audience targeting

- Dynamic content adaptation

- Real-time optimization

Anomaly Detection and Consistency Checks

Advanced AI systems continuously monitor test data for irregularities and statistical anomalies.

The algorithms flag unusual patterns that might indicate problems with the test setup.

Quality checks run automatically to ensure:

- Data consistency across segments

- Statistical significance of results

- Sample size adequacy

- Test environment stability
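
One widely used setup check that fits this description is a sample ratio mismatch (SRM) test: if a 50/50 split delivers clearly uneven group sizes, something in the assignment or tracking is probably broken. The sketch below is our illustration of that check using a chi-square test with one degree of freedom. It is not taken from any specific platform, and the counts are hypothetical.

```python
from math import erfc, sqrt

def srm_p_value(n_control, n_treatment, expected_control_share=0.5):
    """P-value for whether observed group sizes match the planned split."""
    total = n_control + n_treatment
    expected_control = total * expected_control_share
    expected_treatment = total - expected_control
    chi2 = ((n_control - expected_control) ** 2 / expected_control
            + (n_treatment - expected_treatment) ** 2 / expected_treatment)
    # With one degree of freedom, the chi-square tail equals erfc(sqrt(chi2 / 2)).
    return erfc(sqrt(chi2 / 2))

# Hypothetical counts from a test that was planned as a 50/50 split.
p = srm_p_value(5_000, 5_400)
print("possible sample ratio mismatch" if p < 0.001 else "split looks healthy")
```

A very strict threshold such as 0.001 is a common choice here, since the goal is to catch broken setups rather than to react to ordinary fluctuation.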

Benchmarking Model Performance

We measure AI model effectiveness through specific metrics and KPIs.

The systems track conversion rates, user engagement, and revenue impact across different test variations.

Performance indicators to monitor:

- Conversion lift percentage

- Statistical confidence levels

- Learning curve efficiency

- Processing speed and resource usage
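
The first two indicators can be computed from raw counts. The sketch below reports the relative conversion lift together with a normal-approximation confidence interval for the absolute difference. The function name, the returned keys, and the example counts are our own assumptions.

```python
from math import sqrt
from statistics import NormalDist

def lift_with_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Relative lift of B over A plus a confidence interval for the rate difference."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a
    # Unpooled standard error, appropriate for an interval around the difference.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return {
        "relative_lift_pct": 100 * diff / p_a,
        "diff_low": diff - z * se,
        "diff_high": diff + z * se,
    }

# Hypothetical counts: 500 of 10,000 convert on A, 600 of 10,000 on B.
print(lift_with_interval(500, 10_000, 600, 10_000))
```

If the interval for the difference excludes zero, the lift is distinguishable from noise at the chosen confidence level.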

Real-time optimization allows the models to adjust test parameters automatically based on incoming data.

This improves accuracy over time.

AI Tools and Platforms for A/B Testing

Modern AI testing platforms have transformed how we run experiments by automating analysis and delivering faster, more accurate results.

These tools combine machine learning capabilities with traditional testing methods to optimize conversions and user experiences.

Overview of Leading AI Testing Tools

Optimizely is a widely used platform with an intuitive interface and robust AI features for website testing.

The platform excels at processing large datasets and providing actionable insights.

Kameleoon’s predictive testing capabilities use AI to generate test variations automatically.

Their system analyzes past data to predict winning variations before tests complete.

Google Optimize integrated with other Google tools and offered a free tier that made it accessible for small to medium businesses, but Google discontinued it in September 2023.

Teams that relied on it have had to move to other platforms.

Integrations with Existing CRO Workflows

AI-powered testing platforms connect directly with popular CRO tools and analytics systems.

This integration allows for smooth data flow between different marketing tools.

Teams can use ChatGPT and other large language models to generate test variations for headlines, copy, and calls-to-action.

These AI writers help create multiple versions quickly.

We can automate the entire testing process by connecting AI tools to:

- Content management systems

- Analytics platforms

- Email marketing software

- Customer data platforms

Automated Test Execution and Monitoring

Real-time data analysis helps identify winning variations faster than traditional methods.

AI monitors test performance continuously and alerts teams when statistical significance is reached.

Machine learning algorithms detect subtle patterns in user behavior that humans might miss.

This leads to more accurate test results and better optimization decisions.

Key automation features include:

- Dynamic traffic allocation

- Automated sample size calculations

- Fraud detection and data quality monitoring

- Multi-page funnel testing

- Personalization based on user segments

Challenges and Limitations of AI in A/B Testing

AI-powered testing tools face several technical constraints and operational challenges that can impact test results.

These range from data privacy concerns to sample size requirements and complex implementation issues that teams need to carefully navigate.

Common Pitfalls and External Variables

AI-driven testing systems can struggle with confounding variables that affect test outcomes.

Seasonal changes, marketing campaigns, and competitor actions can skew results if not properly controlled.

External factors like browser updates, device differences, and network conditions may interfere with AI’s ability to make accurate predictions.

We must account for these variables in our test design.

Teams often face challenges with test interference, where multiple experiments running simultaneously can affect each other’s results.

Setting up proper isolation and control groups becomes crucial.
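
One way to isolate concurrent experiments is to hash each user into a fixed slot and give every experiment its own non-overlapping range of slots, so no user is ever in two tests at once. The sketch below illustrates that idea. The experiment names, the salt, and the 100-slot layout are hypothetical.

```python
import hashlib

def bucket(user_id, salt, n_buckets=100):
    """Deterministically map a user to a bucket in [0, n_buckets)."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % n_buckets

def assign(user_id, experiments, layer_salt="layer-1"):
    """experiments: list of (name, start, end) with non-overlapping slot ranges."""
    slot = bucket(user_id, layer_salt)
    for name, start, end in experiments:
        if start <= slot < end:
            # Use the experiment name as salt so the variant is independent of the slot.
            variant = "treatment" if bucket(user_id, name, 2) == 1 else "control"
            return name, variant
    return None, None  # user falls outside every experiment's range

# Hypothetical layout: two experiments splitting the traffic in half.
experiments = [("new_checkout", 0, 50), ("new_banner", 50, 100)]
print(assign("user-42", experiments))
```

Because assignment depends only on the user ID and fixed salts, a returning user always sees the same experiment and variant without any stored state.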

Some AI systems may also show confirmation bias by favoring patterns that align with historical data, potentially missing new trends or user behaviors.

Privacy and User Data Considerations

Data privacy regulations require careful handling of user information during testing.

AI systems need substantial data to function effectively while respecting privacy laws.

We must implement strong data anonymization practices and ensure compliance with GDPR, CCPA, and other relevant regulations.

This can limit the depth of personalization and analysis possible.
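
A common first step is to replace raw user identifiers with keyed hashes before test data reaches the analysis layer. The sketch below uses HMAC-SHA256 from the standard library, and the key shown is a placeholder. Note that this is pseudonymization rather than full anonymization: under GDPR, pseudonymized data still counts as personal data, so it reduces exposure but does not remove compliance obligations.

```python
import hashlib
import hmac

def pseudonymize(user_id, secret_key):
    """Replace a raw user ID with a keyed hash that is stable for a given key."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()

# Placeholder key: in practice it should come from a secrets manager, not source code.
key = b"replace-with-a-real-secret"
print(pseudonymize("user-42", key))
```

The same user always maps to the same token under one key, so results can still be joined across events, while rotating the key breaks the link to older data.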

User consent management becomes more complex with AI-driven tests.

Clear communication about data collection and usage is essential.

Addressing Small Sample Sizes and Latency

Small sample sizes can lead to unreliable AI predictions.

We need larger data sets to train models effectively and achieve statistical significance.

Test duration often needs to be longer to gather enough data for AI analysis.

This can slow down the optimization process and delay improvements.

Real-time processing of test data may cause latency issues.

Teams must balance the need for quick results with accurate analysis.

Recognizing the Complexity and Limitations

AI testing tools require specialized expertise to set up and maintain.

Not all teams have the technical skills needed to implement AI-driven testing properly.

Integration with existing systems can be challenging.

We need to ensure compatibility with current analytics tools and marketing platforms.

AI models may struggle with:

- Unexpected user behaviors

- New market conditions

- Rapid changes in customer preferences

- Complex multivariate scenarios

The cost of AI testing tools and infrastructure can be significant.

Teams need to evaluate ROI carefully before implementing these solutions.

Future Trends in AI-Driven A/B Testing

AI is transforming A/B testing with smarter automation, faster results, and deeper insights.

These advances help companies make better decisions through data-driven experiments and personalized customer experiences.

Emerging Techniques and Rapid Iteration

AI-powered testing tools now generate test variations automatically, including different headlines, layouts, and calls-to-action.

This can reduce setup time from days to hours.

We can now run multiple tests simultaneously while AI analyzes results in real time.

This rapid iteration helps identify winning variations faster than traditional methods.

Machine learning algorithms adapt tests based on early results.

If a variation performs poorly, the system automatically directs more traffic to better-performing options.

Key Benefits:

- Automated variant creation

- Real-time performance analysis

- Dynamic traffic allocation

- Faster test completion

Predicting the Impact on Customer Experiences

AI-driven personalization lets us tailor content to specific user segments.

The system learns from user behavior and adjusts experiences automatically.

We can predict user preferences before running full tests.

AI analyzes historical data to forecast which changes will likely succeed with different audience segments.

Smart segmentation creates more relevant experiences by grouping users based on behavior patterns, preferences, and engagement levels.

Influence on Search Engine Results

AI testing tools now factor in SEO impact when evaluating variants.

This ensures changes improve user experience without hurting search rankings.

We track how test variations affect key metrics like page load speed, bounce rates, and time on site.

These metrics are widely treated as relevant to search performance.

Machine learning models help predict how content changes might impact organic search visibility before implementation.

Frequently Asked Questions

AI-powered testing tools analyze data faster, make smarter decisions, and uncover hidden patterns that humans might miss.

These advanced capabilities help businesses make data-driven choices with greater confidence and accuracy.

What are the benefits of using AI in A/B testing compared to traditional methods?

AI-driven A/B testing tools can detect winning variations much faster than traditional methods.

They often identify results in just a few days instead of weeks.

AI testing platforms process larger amounts of data simultaneously while reducing human error in analysis and interpretation.

The automated systems run 24/7, continuously monitoring results and adjusting tests in real time to maximize learning opportunities.

How can machine learning algorithms improve the accuracy of A/B test results?

Machine learning models analyze multiple variables at once to identify complex relationships and interactions.

Basic statistical methods might overlook these patterns.

The algorithms learn and adapt from each test.

They become more precise at predicting which variations will succeed based on historical data patterns.

What criteria should be used to select the appropriate AI-driven A/B testing software?

Look for platforms with proven track records of accurate predictions and user-friendly interfaces.

Choose tools that match your team’s technical expertise.

Check if the software integrates well with your existing tools.

It should handle your website’s traffic volume and data complexity.

The platform should offer clear reporting features and allow for customization of testing parameters.

In what ways can AI automate the A/B testing process for efficiency and effectiveness?

AI can generate test hypotheses and create multiple design variations automatically.

This saves significant time in the setup phase.

The system can monitor tests continuously.

It automatically stops underperforming variations to focus resources on promising options.

How do AI-driven A/B testing strategies handle the analysis of large and complex data sets?

AI systems process millions of data points simultaneously.

They identify patterns and correlations that would be impossible to spot manually.

Advanced algorithms can analyze real-time data and adjust test parameters on the fly to optimize performance.

Can AI in A/B testing predict customer behavior more effectively than manual testing approaches?

AI models analyze historical data and identify subtle patterns in customer interactions.

They use these insights to forecast user behavior.

These predictions help businesses anticipate which changes will likely succeed before running full tests.

AI systems learn from each test result and customer interaction, which increases their accuracy over time.